Why Knowledge Modules Are the Hardest Part of ODI Migration

If you have ever attempted to migrate an Oracle Data Integrator (ODI) project to a modern platform, you have encountered the Knowledge Module problem. On the surface, an ODI interface (or mapping in ODI 12c) looks like a straightforward source-to-target data flow. But beneath every interface is a Knowledge Module — a code-generation template that controls how that data flow is physically executed.

Knowledge Modules are not mappings. They are not transformation logic in the traditional sense. They are meta-programs: templates that generate and execute SQL, DDL, DML, and shell commands at runtime. An IKM does not just “insert data into a target table.” It creates staging tables, generates INSERT/SELECT statements with dynamically resolved column lists, executes MERGE or UPDATE statements with conflict resolution logic, manages error tables, and cleans up temporary objects — all through a multi-step template that interpolates interface metadata at execution time.

This is what makes KM translation so difficult: you are not converting code — you are converting a code generator.

A Knowledge Module is to an ODI interface what a compiler is to source code. You cannot migrate the source code without understanding what the compiler does with it.

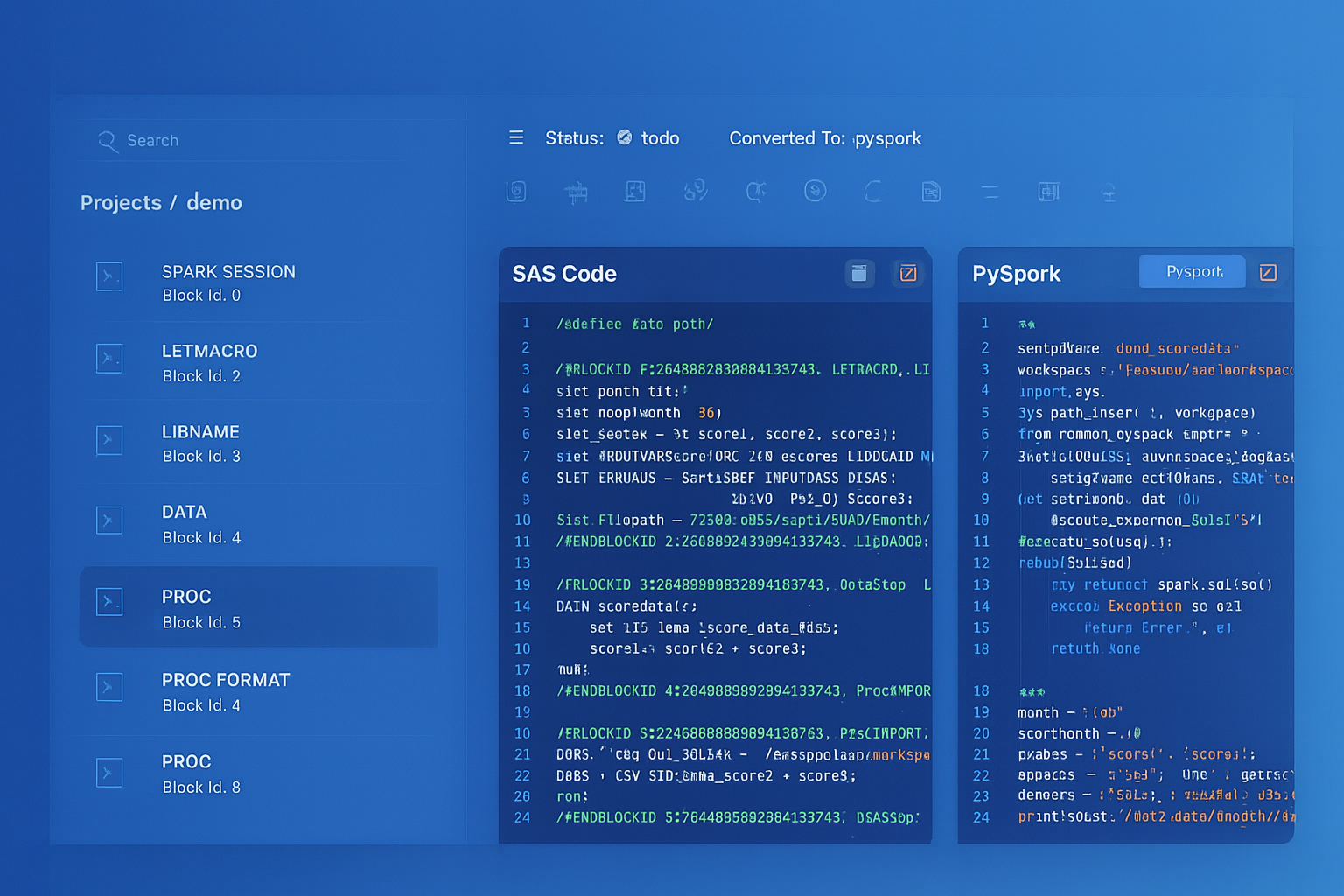

Oracle ODI to dbt migration — automated end-to-end by MigryX

The Five Types of Knowledge Modules

ODI defines five categories of Knowledge Modules, each responsible for a different phase of the data integration lifecycle:

Integration Knowledge Module (IKM)

The IKM controls the final integration step: how data is written from the staging area to the target table. Common IKMs include IKM Oracle Incremental Update, IKM SQL to SQL Append, and IKM Oracle Merge. An IKM typically contains 8–20 steps that execute in sequence, generating DDL and DML specific to the target database technology.

Example IKM steps (simplified from IKM Oracle Incremental Update):

- Create a flow table (I$ temporary integration table)

- Insert source data into the flow table

- Analyze the flow table for optimizer statistics

- Update existing rows in the target

- Insert new rows into the target

- Drop the flow table
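The step sequence above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: it runs against an in-memory SQLite database rather than Oracle, the table names (`src_customer`, `tgt_customer`, `flow_customer`) are hypothetical, and the SQL is hand-written here, whereas a real IKM generates it from the interface metadata.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE src_customer (id INTEGER PRIMARY KEY, name TEXT);
    CREATE TABLE tgt_customer (id INTEGER PRIMARY KEY, name TEXT);
    INSERT INTO src_customer VALUES (1, 'Alice Smith'), (2, 'Bob'), (3, 'Carol');
    INSERT INTO tgt_customer VALUES (1, 'Alice'), (2, 'Bob');
""")

# One entry per simplified IKM step, executed in order.
steps = [
    # 1. Create the flow table
    "CREATE TABLE flow_customer (id INTEGER PRIMARY KEY, name TEXT)",
    # 2. Insert source data into the flow table
    "INSERT INTO flow_customer (id, name) SELECT id, name FROM src_customer",
    # 3. Gather optimizer statistics on the flow table
    "ANALYZE flow_customer",
    # 4. Update existing rows in the target
    """UPDATE tgt_customer
       SET name = (SELECT f.name FROM flow_customer f WHERE f.id = tgt_customer.id)
       WHERE id IN (SELECT id FROM flow_customer)""",
    # 5. Insert new rows into the target
    """INSERT INTO tgt_customer (id, name)
       SELECT f.id, f.name FROM flow_customer f
       WHERE NOT EXISTS (SELECT 1 FROM tgt_customer t WHERE t.id = f.id)""",
    # 6. Drop the flow table
    "DROP TABLE flow_customer",
]
for sql in steps:
    conn.execute(sql)
conn.commit()

rows = conn.execute("SELECT id, name FROM tgt_customer ORDER BY id").fetchall()
print(rows)
```

Row 1 is updated, row 2 is unchanged, and row 3 is inserted. The flow table exists only for the duration of the run.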

Loading Knowledge Module (LKM)

The LKM handles data movement from a source technology to the staging area when the source and the staging area sit on different data servers. For example, LKM File to SQL reads a flat file and loads it into a staging table in the target database. LKM SQL to SQL (Built-In) uses JDBC to transfer data between heterogeneous databases. The LKM generates the SQL or commands needed to bridge the technology gap.

Check Knowledge Module (CKM)

The CKM implements data quality checks. When an ODI interface has constraints defined (not-null checks, reference checks, condition checks), the CKM generates SQL to validate data against these constraints and route failing rows to an error table (E$ table). This is ODI’s built-in data quality framework.
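As a rough illustration of that routing behavior, the sketch below validates rows in a staging table and moves the failures into an error table. It uses SQLite from Python as a stand-in; the table names, the `err_message` column, and the `checks` dictionary are assumptions made for the example and do not reproduce the real E$ table layout.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE region (region_id INTEGER PRIMARY KEY);
    CREATE TABLE flow_customer (id INTEGER, name TEXT, region_id INTEGER);
    CREATE TABLE err_customer (id INTEGER, name TEXT, region_id INTEGER, err_message TEXT);
    INSERT INTO region VALUES (10), (20);
    INSERT INTO flow_customer VALUES (1, 'Alice', 10), (2, NULL, 20), (3, 'Carol', 99);
""")

# Hypothetical constraint definitions: error message -> predicate matching FAILING rows.
checks = {
    "name must not be null": "name IS NULL",
    "region_id must exist in region": "region_id NOT IN (SELECT region_id FROM region)",
}

# Copy every failing row into the error table, tagged with the violated constraint.
for message, failing_predicate in checks.items():
    conn.execute(
        "INSERT INTO err_customer (id, name, region_id, err_message) "
        f"SELECT id, name, region_id, ? FROM flow_customer WHERE {failing_predicate}",
        (message,),
    )

# Remove rejected rows so only clean data continues to the integration step.
conn.execute("DELETE FROM flow_customer WHERE id IN (SELECT id FROM err_customer)")
conn.commit()

errors = conn.execute("SELECT id, err_message FROM err_customer ORDER BY id").fetchall()
clean = conn.execute("SELECT id, name, region_id FROM flow_customer ORDER BY id").fetchall()
print(errors)
print(clean)
```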

Journalizing Knowledge Module (JKM)

The JKM implements Change Data Capture (CDC). It creates journal tables (J$ tables), triggers, or views that track inserts, updates, and deletes in source tables. The JKM is deeply database-specific, often relying on features like Oracle LogMiner, database triggers, or timestamp-based change detection.
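A trigger-based journal can be sketched as follows. This is a simplified model in SQLite, not the objects an Oracle JKM actually creates: the journal table `j_customer`, its columns, and the `I`/`U`/`D` flag values are illustrative assumptions.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE customer (id INTEGER PRIMARY KEY, name TEXT);

    -- Simplified journal table: one row per captured change.
    CREATE TABLE j_customer (
        seq  INTEGER PRIMARY KEY AUTOINCREMENT,
        flag TEXT,
        id   INTEGER
    );

    CREATE TRIGGER trg_customer_ins AFTER INSERT ON customer
    BEGIN INSERT INTO j_customer (flag, id) VALUES ('I', NEW.id); END;

    CREATE TRIGGER trg_customer_upd AFTER UPDATE ON customer
    BEGIN INSERT INTO j_customer (flag, id) VALUES ('U', NEW.id); END;

    CREATE TRIGGER trg_customer_del AFTER DELETE ON customer
    BEGIN INSERT INTO j_customer (flag, id) VALUES ('D', OLD.id); END;
""")

# Ordinary DML against the source table; the triggers record each change.
conn.execute("INSERT INTO customer VALUES (1, 'Alice')")
conn.execute("UPDATE customer SET name = 'Alice Smith' WHERE id = 1")
conn.execute("INSERT INTO customer VALUES (2, 'Bob')")
conn.execute("DELETE FROM customer WHERE id = 2")
conn.commit()

journal = conn.execute("SELECT flag, id FROM j_customer ORDER BY seq").fetchall()
print(journal)
```

A downstream process would read the journal in `seq` order to find out which keys changed since its last run.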

Reverse-Engineering Knowledge Module (RKM)

The RKM extracts metadata from source systems to populate ODI’s data model definitions. While RKMs are important during ODI development, they are typically not migrated because the target platform has its own metadata discovery mechanisms.

MigryX: Purpose-Built Parsers for Every Legacy Technology

MigryX does not rely on generic text matching or regex-based parsing. For every supported legacy technology, MigryX has built a dedicated Abstract Syntax Tree (AST) parser that understands the full grammar and semantics of that platform. This means MigryX captures not just what the code does, but why — understanding implicit behaviors, default settings, and platform-specific quirks that generic tools miss entirely.

How Knowledge Modules Generate SQL

ODI Knowledge Modules embed complex logic using a proprietary substitution API — a template language that dynamically generates SQL at runtime based on metadata context. This makes KMs fundamentally different from static SQL scripts and significantly harder to migrate.

Each KM step contains a template that references interface metadata — column lists, table names, transformation expressions, join conditions — and produces the actual DDL and DML that the database executes. The generated SQL is different for every interface that uses the KM, because the template resolves against that interface’s specific columns, expressions, and configuration options.

This dynamic code generation is what makes manual KM migration impractical at scale. A single IKM template might generate hundreds of distinct SQL scripts across an ODI project, each tailored to a different interface’s metadata. Translating the template once is not enough — you must understand what it produces for every interface it serves.
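To make the "one template, many scripts" point concrete, here is a minimal sketch of metadata-driven SQL generation in Python. It does not use ODI's substitution API. The `Mapping` class and `render_insert` function are hypothetical stand-ins for interface metadata and a single KM step template.

```python
from dataclasses import dataclass


@dataclass
class Mapping:
    """Hypothetical stand-in for the metadata an interface exposes to a KM."""
    target_table: str
    source_table: str
    columns: dict[str, str]  # target column -> source expression
    filter_condition: str = ""


def render_insert(mapping: Mapping) -> str:
    """Render one INSERT ... SELECT statement from the mapping metadata."""
    target_cols = ", ".join(mapping.columns)
    source_exprs = ", ".join(mapping.columns.values())
    sql = (
        f"INSERT INTO {mapping.target_table} ({target_cols}) "
        f"SELECT {source_exprs} FROM {mapping.source_table}"
    )
    if mapping.filter_condition:
        sql += f" WHERE {mapping.filter_condition}"
    return sql


customers = Mapping(
    target_table="DW_CUSTOMER",
    source_table="SRC_CUSTOMER",
    columns={"CUST_ID": "ID", "CUST_NAME": "UPPER(NAME)"},
    filter_condition="ACTIVE = 1",
)
orders = Mapping(
    target_table="DW_ORDER",
    source_table="SRC_ORDER",
    columns={"ORDER_ID": "ID", "AMOUNT": "QTY * PRICE"},
)

# The same template yields different SQL for each interface.
print(render_insert(customers))
print(render_insert(orders))
```

Translating `render_insert` alone says little about what runs in production. The migration has to account for the statement it emits for each mapping.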

MigryX’s Approach to KM Translation

MigryX does not attempt a line-by-line translation of KM templates. Instead, it extracts the intent of each KM and generates semantically equivalent code in the target language.

MigryX automatically identifies the integration pattern each KM implements and generates semantically equivalent cloud-native code — whether the target is PySpark, Snowflake, dbt, or Databricks. This intent-based approach means MigryX handles the full spectrum of KM behaviors, from simple append operations to complex multi-step integration patterns, without requiring manual analysis of each KM template.

Consider the complexity of a typical IKM Oracle Incremental Update pattern:

A typical incremental update IKM generates a multi-step SQL workflow: create a temporary staging table, load filtered source data with transformations applied, update existing rows in the target based on key matching, insert new rows that do not yet exist, and finally drop the staging table. Each step depends on interface-specific metadata — column lists, transformation expressions, key columns, and filter conditions — all resolved dynamically from the KM template.

This kind of multi-step, metadata-driven orchestration is exactly what makes manual translation so error-prone. A single missed step or incorrectly mapped column can cause silent data corruption that only surfaces weeks later in downstream reports.

MigryX understands the semantic intent behind each KM pattern — whether it is an incremental load, full refresh, or SCD implementation — and generates equivalent cloud-native code using modern merge patterns. The generated code eliminates unnecessary staging artifacts (temporary tables, manual cleanup steps) and leverages platform-native capabilities like in-memory DataFrames, Delta Lake merge operations, or Snowflake MERGE statements, producing cleaner and more maintainable pipelines.
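The general idea of collapsing a staged update-then-insert into one merge-style statement can be illustrated as follows. This is not MigryX output. It is a sketch that uses SQLite's `INSERT ... ON CONFLICT DO UPDATE` (available in SQLite 3.24 and later) as a stand-in for a platform MERGE, with hypothetical table names.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE src_customer (id INTEGER PRIMARY KEY, name TEXT);
    CREATE TABLE tgt_customer (id INTEGER PRIMARY KEY, name TEXT);
    INSERT INTO src_customer VALUES (1, 'Alice Smith'), (2, 'Bob'), (3, 'Carol');
    INSERT INTO tgt_customer VALUES (1, 'Alice'), (2, 'Bob');
""")

# A single statement replaces the create/load/update/insert/drop sequence.
# "WHERE 1" is needed so SQLite can parse ON CONFLICT after a SELECT.
conn.execute("""
    INSERT INTO tgt_customer (id, name)
    SELECT id, name FROM src_customer WHERE 1
    ON CONFLICT(id) DO UPDATE SET name = excluded.name
""")
conn.commit()

merged_rows = conn.execute("SELECT id, name FROM tgt_customer ORDER BY id").fetchall()
print(merged_rows)
```

No staging table is created or dropped. The key match, the update, and the insert all happen inside one statement.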

Maintaining Transformation Semantics During KM Translation

MigryX validates the converted code at multiple levels — from syntax correctness through semantic equivalence — ensuring the migrated pipeline produces identical results.

For each converted interface, MigryX generates a semantic equivalence report documenting every pattern translation and behavioral decision made during conversion. This gives migration teams full traceability from the original KM behavior to the target implementation.


From Legacy Complexity to Modern Clarity with MigryX

Legacy ETL platforms encode business logic in visual workflows, proprietary XML formats, and platform-specific constructs that are opaque to standard analysis tools. MigryX’s deep parsers crack open these proprietary formats and extract the underlying data transformations, business rules, and data flows. The result is complete transparency into what your legacy code actually does — often revealing undocumented logic that even the original developers had forgotten.

Handling CKM and JKM Patterns

While IKMs and LKMs receive the most attention during migration, CKM and JKM patterns also require careful translation:

CKM Translation: Data Quality Checks

ODI CKMs generate SQL that validates data against constraints and routes failing rows to error tables. In the target platform, this maps to:

- dbt: dbt tests (`schema.yml` tests for not-null, unique, accepted-values, relationships) plus custom test macros for complex conditions. Failing rows can be captured using the `store_failures` configuration.

- PySpark: DataFrame assertions and quality check functions that filter rows failing validation into a separate error DataFrame, which is persisted for audit purposes.

- Great Expectations: For organizations adopting a dedicated data quality framework, CKM constraints translate naturally to Great Expectations suites.

JKM Translation: Change Data Capture

JKM patterns are the most database-specific of all KMs. An Oracle JKM might use triggers and journal tables (J$ tables), while a SQL Server JKM might use Change Tracking or CDC features. In the target platform:

- Delta Lake: Delta’s `MERGE` with `whenMatchedDelete` handles CDC merge patterns. Delta’s time travel feature provides an alternative to journal tables for point-in-time queries.

- Snowflake Streams: Snowflake’s native Streams feature tracks changes on tables and provides a change log that replaces ODI journal tables.

- Debezium + Kafka: For real-time CDC needs, organizations may replace batch JKM patterns with Debezium-based log capture feeding into a streaming pipeline.
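Whichever CDC mechanism is chosen, the downstream logic is the same: apply an ordered stream of inserts, updates, and deletes to the target. The sketch below models that semantics in plain Python. It does not call the Delta Lake, Snowflake, or Debezium APIs, and the `apply_changes` function and the `I`/`U`/`D` operation codes are assumptions made for illustration.

```python
def apply_changes(target, changes):
    """Apply an ordered change log to a target keyed by primary key.

    target:  dict mapping key -> row
    changes: iterable of (op, key, row) tuples, where op is 'I', 'U' or 'D'
    Returns a new dict and leaves the input untouched.
    """
    result = dict(target)
    for op, key, row in changes:
        if op == "D":
            # Matched delete: remove the row if it is present.
            result.pop(key, None)
        elif op in ("I", "U"):
            # Insert or update: the latest change for a key wins.
            result[key] = row
        else:
            raise ValueError(f"unknown change operation: {op!r}")
    return result


target = {1: {"name": "Alice"}, 2: {"name": "Bob"}}
changes = [
    ("U", 1, {"name": "Alice Smith"}),
    ("D", 2, None),
    ("I", 3, {"name": "Carol"}),
]

merged = apply_changes(target, changes)
print(merged)
```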

Testing KM-Derived Pipelines

Translated KM logic must be rigorously tested because KMs encode the most operationally sensitive aspects of a data pipeline: how data physically moves, how conflicts are resolved, and how errors are handled. Testing should cover three dimensions:

Functional Testing

For each converted interface, execute the new pipeline against a representative dataset and compare results with the original ODI execution. Validate:

- Row counts: Source and target row counts must match between old and new. Where the new pipeline still has a staging step, compare staging row counts as well.

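- Column checksums: Compute a checksum (e.g., MD5 hash of the concatenated columns, joined with a delimiter and an explicit NULL marker so that distinct rows cannot hash identically) for every row in the target and compare between old and new outputs.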

- Edge cases: Null handling, empty strings, boundary dates, Unicode characters, and numeric precision must produce identical results.
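A minimal sketch of the checksum comparison, assuming both outputs have been fetched as lists of tuples with the key in the first position. The function names, the separator, and the NULL marker are choices made for this example.

```python
import hashlib

FIELD_SEPARATOR = "\x1f"   # unit separator, unlikely to appear in data
NULL_MARKER = "\x00NULL"   # keeps NULL distinct from the empty string


def row_checksum(row):
    """MD5 over the row's values, with a separator and an explicit NULL marker."""
    parts = [NULL_MARKER if value is None else str(value) for value in row]
    return hashlib.md5(FIELD_SEPARATOR.join(parts).encode("utf-8")).hexdigest()


def diff_outputs(old_rows, new_rows, key_index=0):
    """Compare two result sets by key and per-row checksum."""
    old = {row[key_index]: row_checksum(row) for row in old_rows}
    new = {row[key_index]: row_checksum(row) for row in new_rows}
    return {
        "missing_in_new": sorted(old.keys() - new.keys()),
        "unexpected_in_new": sorted(new.keys() - old.keys()),
        "mismatched": sorted(k for k in old.keys() & new.keys() if old[k] != new[k]),
    }


odi_output = [(1, "Alice", None), (2, "Bob", "x"), (3, "Carol", "y")]
new_output = [(1, "Alice", ""), (2, "Bob", "x"), (4, "Dan", "z")]

report = diff_outputs(odi_output, new_output)
print(report)
```

Row 1 is reported as mismatched because the old output holds NULL where the new one holds an empty string, which is the kind of edge case a plain concatenation would hide.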

Integration Pattern Testing

Test the specific integration pattern (append, upsert, merge, SCD) under various conditions:

- Initial load: Empty target, full source load.

- Incremental load with new rows: Verify inserts are applied correctly.

- Incremental load with changed rows: Verify updates are applied to the correct rows with the correct values.

- Incremental load with deleted rows: If the original KM handled deletes (soft or hard), verify the same behavior in the target.

- Idempotency: Running the pipeline twice with the same input should produce the same result as running it once.
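Several of these scenarios can be scripted as plain assertions. The sketch below assumes a hypothetical `run_pipeline` function standing in for the converted upsert pipeline and uses an in-memory SQLite table as the target. A real test would call the actual pipeline against the actual platform.

```python
import sqlite3


def run_pipeline(conn, source_rows):
    """Hypothetical stand-in for a converted upsert pipeline."""
    # Update rows whose key already exists in the target.
    conn.executemany(
        "UPDATE target SET name = ? WHERE id = ?",
        [(name, id_) for id_, name in source_rows],
    )
    # Insert rows whose key is not yet present.
    conn.executemany(
        "INSERT INTO target (id, name) SELECT ?, ? "
        "WHERE NOT EXISTS (SELECT 1 FROM target WHERE id = ?)",
        [(id_, name, id_) for id_, name in source_rows],
    )
    conn.commit()


def snapshot(conn):
    """Return the full target contents in a stable order."""
    return conn.execute("SELECT id, name FROM target ORDER BY id").fetchall()


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE target (id INTEGER PRIMARY KEY, name TEXT)")

# Initial load: empty target, full source.
run_pipeline(conn, [(1, "Alice"), (2, "Bob")])
assert snapshot(conn) == [(1, "Alice"), (2, "Bob")]

# Incremental load with one changed row and one new row.
batch = [(2, "Robert"), (3, "Carol")]
run_pipeline(conn, batch)
after_first_run = snapshot(conn)
assert after_first_run == [(1, "Alice"), (2, "Robert"), (3, "Carol")]

# Idempotency: replaying the same batch must not change the target.
run_pipeline(conn, batch)
after_second_run = snapshot(conn)
assert after_second_run == after_first_run
```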

Performance and SLA Testing

KMs often include performance-oriented features: parallel hints, batch commit intervals, statistics gathering, and index management. The converted pipeline must meet the same SLA requirements:

- Execution time: Compare wall-clock time between old and new pipelines for representative data volumes.

- Resource utilization: Monitor CPU, memory, and I/O on the target platform to ensure the pipeline runs within allocated resources.

- Concurrency: If multiple interfaces run in parallel in production, test the converted pipelines under the same concurrency conditions to detect locking, resource contention, or race conditions.

A KM translation that produces correct results but misses the SLA window is not a successful migration. Performance testing must be part of the validation plan, not an afterthought.

Key Takeaways

Knowledge Modules are the most technically demanding aspect of any ODI migration. They are code generators, not code — and translating them requires understanding both the template system and the runtime behavior it produces. The essential principles are:

- KMs are code generators, not code: You cannot simply re-write a KM template in another language. Each template produces different SQL for every interface it serves, making manual translation impractical at scale.

- Intent matters more than syntax: The goal is behavioral equivalence. A successful migration preserves what the KM does — not how it is written.

- Modern platforms eliminate unnecessary staging: Multi-step create/load/transform/drop patterns from Oracle-era KMs can often collapse into a single operation on platforms like Spark or Snowflake.

- Specialized tooling is essential: The combination of proprietary template syntax, metadata-driven code generation, and database-specific optimizations means KM translation requires purpose-built automation — not manual re-coding.

- Test the integration pattern: Functional testing alone is insufficient. Test initial loads, incremental loads, conflict resolution, and idempotency explicitly.

- Validate performance: KMs often contain database-specific optimizations. The target code must meet the same SLA requirements under production-scale data volumes.

Organizations that invest in understanding their KMs before migration — rather than treating them as opaque configuration — consistently achieve faster, higher-quality migrations with fewer post-cutover issues.

Why MigryX Is the Only Platform That Handles This Migration

The challenges described throughout this article are exactly what MigryX was built to solve. Here is how MigryX transforms this process:

- Deep AST parsing: MigryX’s custom-built parsers achieve 95% accuracy on every supported legacy technology — not through approximation, but through true semantic understanding.

- Merlin AI augmentation: Where deterministic parsing reaches its limit, Merlin AI resolves ambiguities and implicit behaviors, pushing accuracy to 99%.

- Complete coverage: MigryX supports 25+ source technologies including SAS, Informatica, DataStage, SSIS, Alteryx, Talend, ODI, Teradata, and Oracle PL/SQL.

- End-to-end automation: From parsing to conversion to validation — MigryX automates the entire pipeline, not just one step.

MigryX combines precision AST parsing with Merlin AI to deliver 99% accurate, production-ready migration, turning what used to be a multi-year manual effort into a streamlined, validated process.
